Chat overview

Chat in Visual Studio Code enables you to use natural language for AI-powered coding assistance. Ask questions about your code, get help understanding complex logic, generate new features, fix bugs, and more, all through a conversational interface. This article provides an overview of the chat surfaces, how to add context, choose a language model, write effective prompts, and review AI-generated changes.

Prerequisites

- Access to GitHub Copilot. If you don't have a subscription, you can use Copilot for free by signing up for the Copilot Free plan.

Important: Starting April 20, 2026, new sign-ups for Copilot Pro, Copilot Pro+, and student plans are temporarily paused. Additionally, we are tightening weekly usage limits. See GitHub Copilot usage limits.

Access chat in VS Code

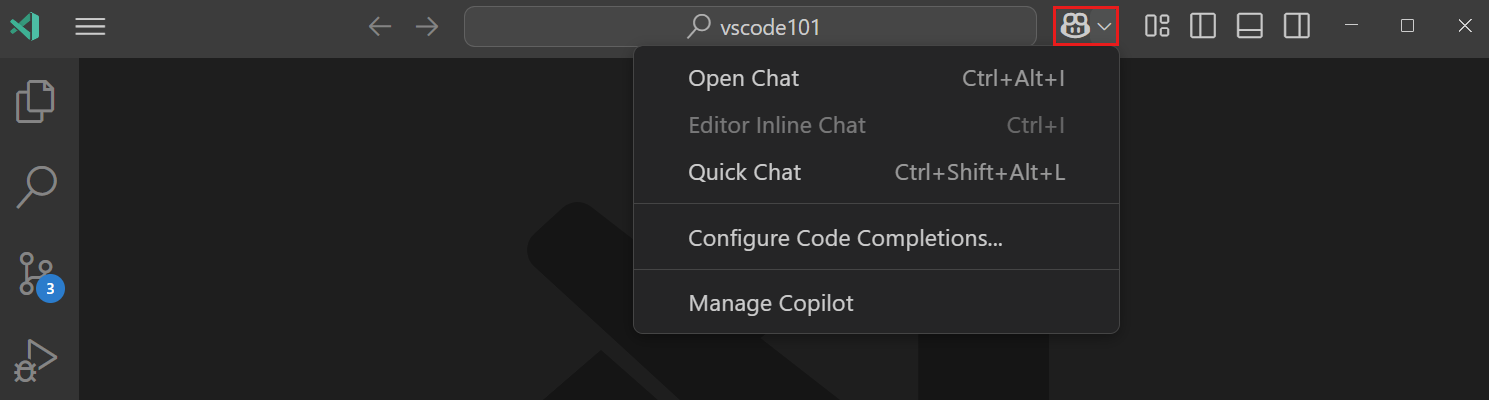

VS Code provides multiple ways to start an AI chat conversation, each optimized for different workflows. Use the Chat menu in the VS Code title bar or the corresponding keyboard shortcuts.

| Surface | Shortcut | Best for | Learn more |
|---|---|---|---|
| Chat view | ⌃⌘I (Windows, Linux Ctrl+Alt+I) | Multi-turn conversations, agentic workflows, multi-file edits. Also available as an editor tab or separate window. | Chat sessions |
| Inline chat | ⌘I (Windows, Linux Ctrl+I) | In-place code edits and terminal command suggestions. | Inline chat |
| Quick chat | ⇧⌥⌘L (Windows, Linux Ctrl+Shift+Alt+L) | Quick questions without leaving your current view. Opens a lightweight chat panel at the top of the editor. | Quick Chat |
| Command line | code chat | Starting chat from outside VS Code. | CLI docs |
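To illustrate the command-line surface, here is a minimal Python sketch that assembles a `code chat` invocation. Only the `code chat` command comes from the table above. The `--mode` and `--add-file` flags are assumptions that you should confirm with `code chat --help`, and the helper names `build_chat_command` and `start_chat` are hypothetical.

```python
import shlex
import shutil
import subprocess
from typing import Iterable, List, Optional


def build_chat_command(prompt: str, mode: Optional[str] = None,
                       add_files: Iterable[str] = ()) -> List[str]:
    """Assemble the argument list for `code chat` without running it."""
    argv = ["code", "chat"]
    if mode:
        # Assumption: --mode selects the agent, for example "ask" or "agent".
        argv += ["--mode", mode]
    for path in add_files:
        # Assumption: --add-file attaches a file as context.
        argv += ["--add-file", path]
    argv.append(prompt)
    return argv


def start_chat(prompt: str, mode: Optional[str] = None,
               add_files: Iterable[str] = ()) -> None:
    """Launch VS Code chat; raises if the `code` command is not on PATH."""
    executable = shutil.which("code")
    if executable is None:
        raise FileNotFoundError("The `code` command is not on PATH.")
    argv = build_chat_command(prompt, mode, add_files)
    subprocess.run([executable, *argv[1:]], check=True)


if __name__ == "__main__":
    # Dry run: print the command instead of launching VS Code.
    command = build_chat_command("Explain this file", mode="ask",
                                 add_files=["src/app.js"])
    print(shlex.join(command))
```

Keeping command construction separate from execution lets you inspect the command before anything opens. Call `start_chat` only after checking that the flags match your installed VS Code version.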

Submit your first prompt

To see how chat works, try creating a basic app:

- Open the Chat view by pressing ⌃⌘I (Windows, Linux Ctrl+Alt+I) or selecting Chat from the VS Code title bar.

- Select where you want to run the agent by using the Agent Target dropdown. For example, select Local to run the agent interactively in the editor with full access to your workspace, tools, and models.

- Select an agent from the agent picker. For example, select Agent to let chat autonomously determine what needs to be done and make changes to your workspace. Learn more about choosing an agent.

- Type the following prompt in the chat input field and press Enter to submit it:

  Create a basic calculator app with HTML, CSS, and JavaScript

  The agent applies changes directly to your workspace and might also run terminal commands, for example, to install dependencies or run build scripts.

- In the editor, review the suggested changes and choose to keep or discard them.

For a full hands-on walkthrough, follow the agents tutorial.

Configure your chat session

When you start a chat session, the following choices shape how the AI responds:

- Session type: determines where the agent runs (locally, in the background, or in the cloud). Learn more about agent types.

- Agent: determines the role or persona of the AI, such as Agent, Plan, or Ask. Learn more about choosing an agent.

- Permission level: controls how much autonomy the agent has over tool approvals. Learn more about permission levels.

- Language model: determines which AI model powers the conversation. Learn more about language models in VS Code.

Add context to your prompts

Providing the right context helps the AI generate more relevant and accurate responses.

- Implicit context: VS Code automatically includes the active file, your current selection, and the file name as context. When you use agents, the agent decides autonomously if additional context is needed.

- #-mentions: type # in the chat input to explicitly reference files (#file), folders, symbols, your codebase (#codebase), terminal output (#terminalSelection), or tools like #fetch.

- @-mentions: type @ to invoke specialized chat participants like @vscode or @terminal, each optimized for their respective domain.

- Vision: attach images, such as screenshots or UI mockups, as context for your prompt.

- Browser elements (Experimental): select elements from the integrated browser to add HTML, CSS, and screenshot context to your prompt.

Learn more about managing context for AI.

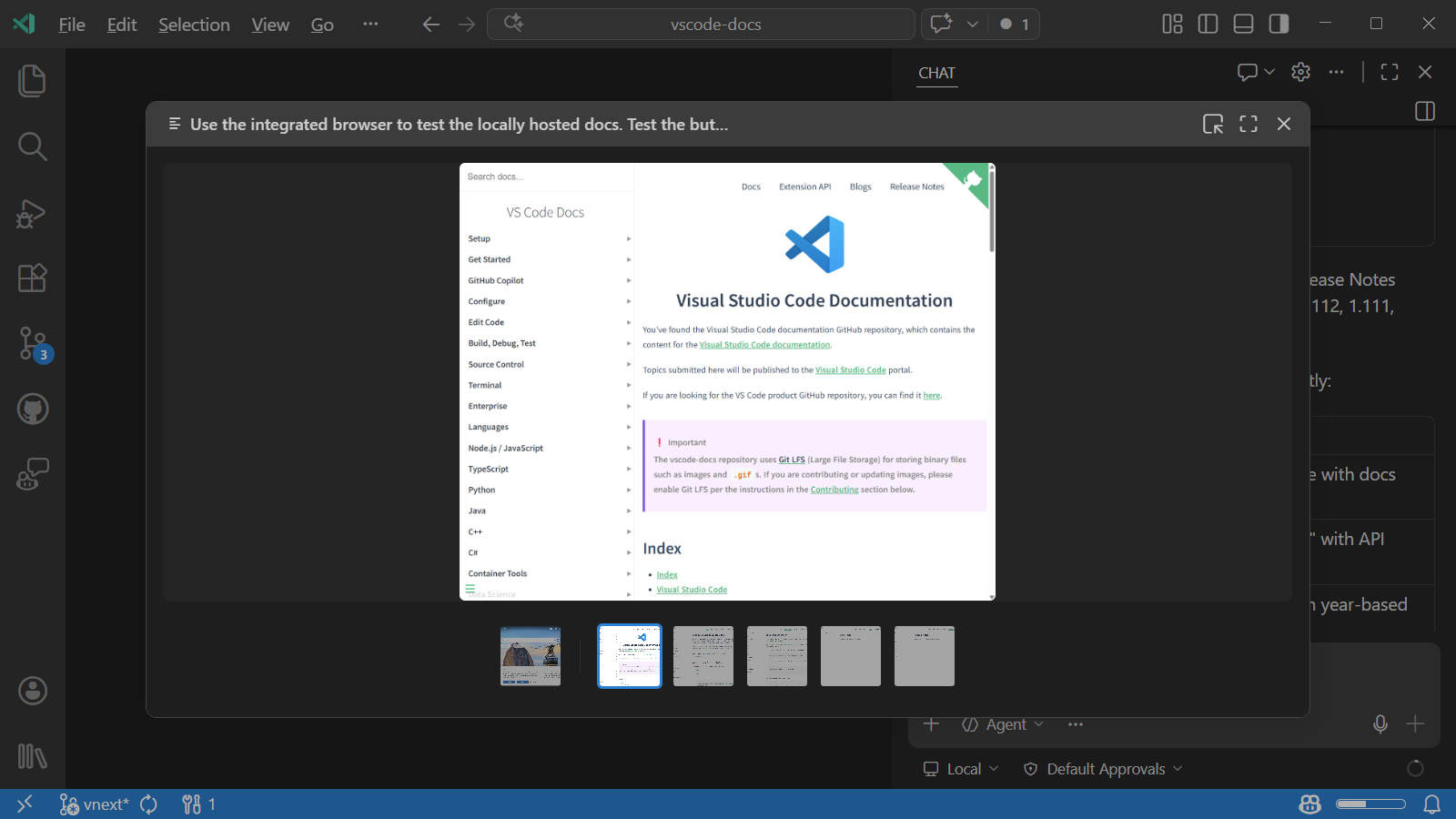

Image carousel (Experimental)

When the imageCarousel.chat.enabled setting is turned on, you can select images or videos in chat responses to open a dedicated carousel view. The carousel includes media files from tool results (such as the integrated browser, Playwright, or other MCP servers) as well as media inlined in assistant messages.

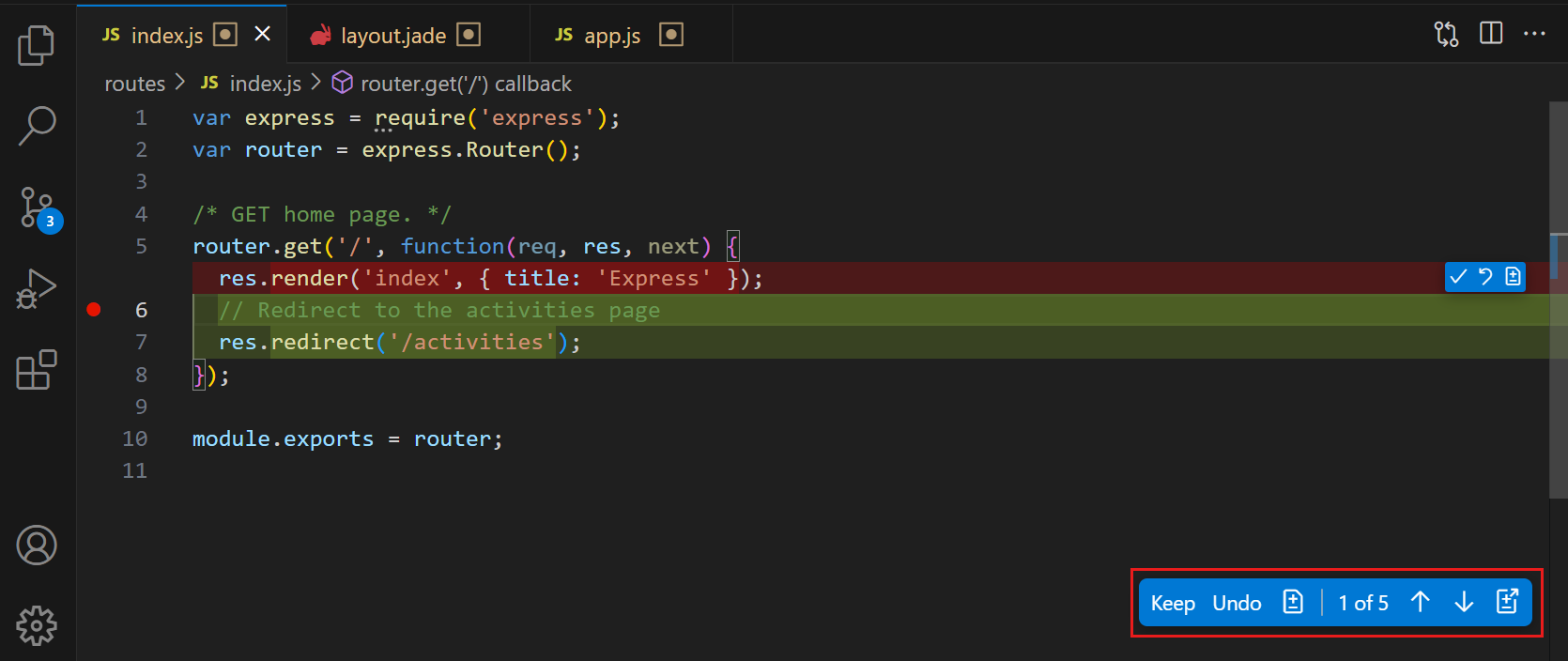
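As a rough illustration of turning on the carousel setting, the following Python sketch adds `imageCarousel.chat.enabled` to the text of a settings file. It assumes the settings text is plain JSON without comments. Real VS Code settings files can contain comments, which `json.loads` rejects, so treat this as a sketch rather than a tool to run against your actual settings. The function name `enable_image_carousel` is hypothetical.

```python
import json


def enable_image_carousel(settings_text: str) -> str:
    """Return settings JSON text with the carousel setting switched on."""
    # Assumption: the input is comment-free JSON (or empty).
    settings = json.loads(settings_text) if settings_text.strip() else {}
    settings["imageCarousel.chat.enabled"] = True
    return json.dumps(settings, indent=2)


if __name__ == "__main__":
    # Existing keys are preserved; only the carousel key is added.
    print(enable_image_carousel('{"editor.fontSize": 14}'))
```

In practice, toggling the setting in the Settings editor is simpler. The sketch mainly shows the key and the boolean value it expects.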

Review and manage changes

After the AI makes changes to your files, review and accept or discard them.

- Review inline diffs: open a changed file to see inline diffs of the applied changes. Use the editor overlay controls to navigate between edits and Keep or Undo individual changes. For more information, see reviewing AI-generated code edits.

- Use checkpoints: VS Code can automatically create snapshots of your files at key points during chat interactions, enabling you to roll back to a previous state. For more information, see checkpoints and editing requests.

- Stage to accept: staging your changes in the Source Control view automatically accepts any pending edits. Discarding changes also discards pending edits.

Get better responses

Chat provides several ways to improve the quality and relevance of AI responses:

- Write effective prompts: be specific about what you want, reference relevant files and symbols, and use /commands for common tasks. Get inspired by prompt examples or review the full prompt engineering guide.

- Customize the AI: tailor the AI's behavior to your project by adding custom instructions, creating reusable prompt files, or building custom agents for specialized workflows. For example, create a "Code Reviewer" agent that provides feedback on code quality and adherence to your team's coding standards.

- Extend with tools: connect MCP servers or install extensions that contribute tools to give the agent access to external services, databases, or APIs.

For more information, see customizing AI in VS Code.

Troubleshoot chat interactions

Use Agent Logs and the Chat Debug view to inspect what happens when you send a prompt. Agent Logs shows a chronological event log of tool calls, LLM requests, and prompt file discovery. The Chat Debug view shows the raw system prompt, user prompt, context, and tool payloads for each interaction. These tools are useful for understanding why the AI responded in a certain way or for troubleshooting unexpected results.